Engineering FINEST Outcomes...

Experience the delight of crafting AI-powered digital solutions that can transform your business with personalized outcomes.

Start with WHY?

Discover some of the pivotal decisions you have to make for the future of your business.

Why Choose Digital?

Business transformation starts with Digital transformation

What We Offer

Unlock your business potential with technology solutions crafted to fit your exact needs — Your Growth, Your Way.

Launch

Launch a Minimum Viable Product within 60-90 days. Quickly validate ideas with core features.

Scale

Develop scalable SaaS platforms with user management, subscriptions, analytics, and more.

Automate

Implement AI-powered agents to enhance user experience, automate tasks, and boost efficiency.

Audit

Perform a detailed system audit to find risks, inefficiencies, and areas for improvement.

Consult

Get expert consulting to define product strategy, architecture, and a clear growth path.

Why Choose a Digital accelerator?

Go-to-Market success is driven by Product development acceleration.

Set yourself apart from the competition with off-the-rack turnkey solutions that fast-track your progress.

At Ysquare, we assemble industry-specific pathways with modular components to accelerate your product development journey.

WHY Ysquare?

Our Engineering Marvels

Excellence in Numbers

- 7+ Years

- 50+ Skilled Experts

- 500+ Libraries & Frameworks

- 5k+ Agile Sprints

- 2M+ Humans & Devices

For our diverse clientele spread across India, USA, Canada, UAE & Singapore

Our Engagement Models

At Ysquare, we establish working models offering genuine value and flexibility for your business.

BUILD-OPERATE-TRANSFER

Retain your product expertise through seamless product & team transition.

Build your product & core team with us.

Accelerate product→market with proven processes

Focus on roadmap & traction with a managed team.

Ensure continuity through seamless transitions.

Protect product IP by moving experts onto your payroll.

RESOURCE RETAINER

Augment your team with the right skills & expertise tailored for your product roadmap.

Build your product in-house with extended teams.

Accelerate onboarding of experts in a week or two.

Focus on roadmap with no payroll function worries.

Ensure continuity through seamless replacements.

Adjust team size easily with a month’s notice.

LEAN BASED FIXED SCOPE

Build your product iteratively through our value-driven custom development approach.

Build your product with our proven expertise.

Accelerate development with ready-made components.

Focus on growth without the pain of product management.

Ensure product clarity with a discovery-driven approach.

Stay lean with releases at least every two months.

What Our Clients Have To Say

Creative Corner

Follow us on Ysquare's Knowledge Hub

No Clear AI Ownership: The Silent Reason Your AI Agents Keep Breaking Down

Your AI agent goes live. It works. Then three weeks later, something quietly goes wrong. Outputs start drifting. A workflow sends the wrong notification. A report pulls stale data. And when you ask who is responsible for fixing it, everyone looks at someone else.

That is not a technology problem. That is an ownership problem.

No clear AI ownership in organizations is one of the most overlooked readiness gaps in enterprise AI today. You can build the most sophisticated agent in the world, but if nobody is accountable for its outcomes, it will fail. Slowly. Quietly. Expensively. This piece is part of our AI Agent Readiness Series, and it addresses Sign 11 from the framework: No Clear Ownership. If you have been nodding along to other signs in this series, like scattered knowledge silently sabotaging your AI or multiple versions of truth killing your data decisions, this one will hit close to home.

What Does No Clear AI Ownership Actually Mean?

Let’s be honest. Most companies deploy AI agents with a lot of excitement and very little clarity on who owns what after go-live.

No clear AI ownership means there is no single person or team formally accountable for an AI agent’s performance, outputs, or continuous improvement. It is not about who built it or who approved the budget. It is about who wakes up at 7 AM when the agent starts sending customers the wrong information.

Here is what this typically looks like in practice:

- The IT team says it is a business problem once it is deployed.

- The business team says it is a technical issue when something breaks.

- The vendor says it is working as intended.

- Leadership is waiting for a report that nobody is writing.

When issues remain unresolved because nobody is responsible for AI outcomes, the damage compounds every single day. That is the real cost of unclear accountability.

It connects directly to other readiness gaps too. If your documentation does not reflect how work actually happens, then your AI agent is working from a broken map. And if nobody owns the agent, nobody updates that map either.

Why AI Accountability in Business Is Not Optional

There is a phrase that applies perfectly here: ownership drives accountability. Without it, you do not have AI-assisted operations. You have AI-assisted chaos with better branding.

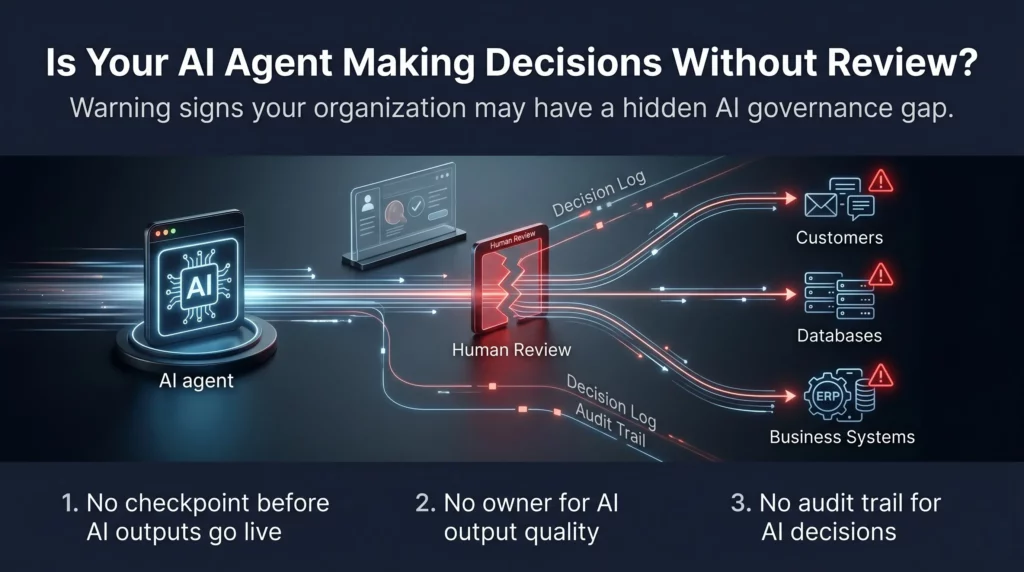

Think about what happens when an AI agent makes a wrong decision without a defined owner to catch it. If nobody validates outputs, mistakes can scale quickly. That is not a theoretical concern. In B2B environments where agents handle customer communications, data routing, or financial approvals, a single undetected error can trigger a cascade.

We covered the approval problem in depth in our piece on AI agents failing without an approval or review layer. But even a well-designed approval layer falls apart when no one is accountable for reviewing the reviews.

The real question is not whether your AI agent will ever make a mistake. It will. Every system does. The question is whether you have someone positioned to catch it, correct it, and prevent it from happening again. That person needs a title, a mandate, and the authority to act.

AI accountability in business is not a governance checkbox. It is the operating system that keeps your AI investments producing returns instead of producing liability.

The Real Cost of Undefined AI Accountability in Enterprise Teams

Let’s talk about what this actually costs you. Not in abstract terms but in operational reality.

1. Performance Degrades Without Anyone Noticing

AI agents are not static. Business context changes. Data sources evolve. Customer behavior shifts. Without an owner monitoring performance metrics, your agent keeps running on logic that was accurate six months ago and is quietly wrong today.

This connects directly to the measurement gap. When you are not tracking metrics for AI performance, you have no way to detect that your AI is underperforming until the damage is already done. Ownership without measurement is blind. Measurement without ownership is pointless.

2. Nobody Iterates. Performance Stagnates.

AI systems improve with feedback. That is not a nice-to-have. That is how they work. Without post-launch iteration driven by a named owner, your agent reaches a performance ceiling on day one and stays there.

We wrote about this specifically in the context of no post-launch iteration being a critical AI readiness gap. Without someone accountable for ongoing improvement, the agent becomes a legacy system the moment it goes live.

3. Conflicts Get Kicked Upstairs or Ignored

When your AI agent produces conflicting outputs across departments, someone needs the authority to resolve those conflicts. Without a defined owner, those conflicts sit in email threads and Slack messages for weeks. Meanwhile, the agent keeps producing wrong outputs at scale.

4. Security Gaps Go Unaddressed

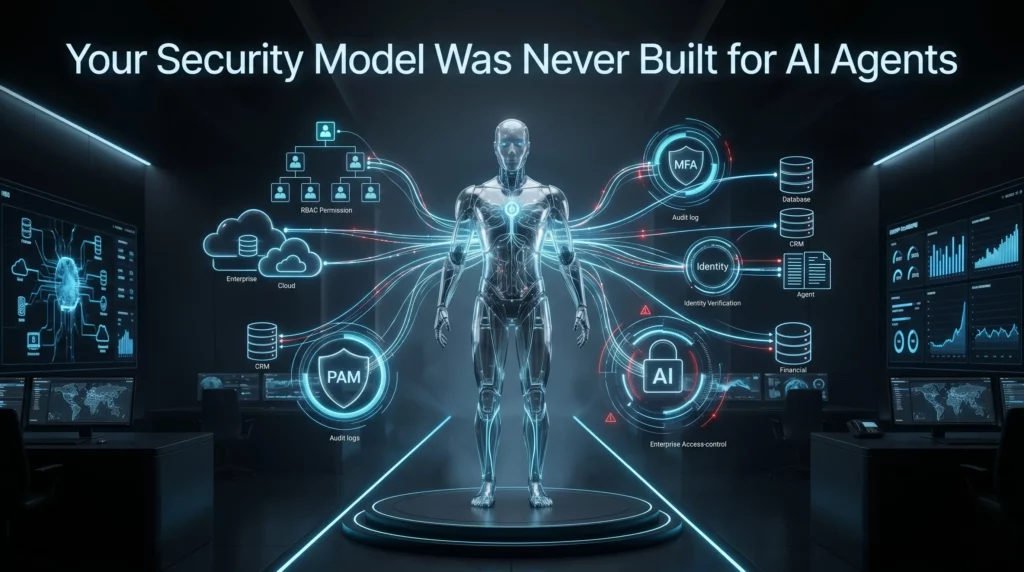

An AI agent operates differently from a human employee. It does not get tired, distracted, or hesitant. When it has access to sensitive systems and nobody owns it, the access permissions set at launch never get reviewed. We explored this in our piece on security systems built only for humans failing AI agents. The ownership gap and the security gap feed each other.

What Good AI Ownership Structure Looks Like

Good AI ownership is not about adding another title to your org chart. It is about clarity. Here is what a functional ownership model looks like in practice.

Name One Person Per Agent

Every deployed AI agent should have exactly one named owner. Not a committee. Not a shared inbox. One person who is accountable for its performance, its outputs, and its ongoing improvement. That person should be close enough to the business process to understand context and senior enough to make decisions without escalating every change.

Define the Scope of Ownership

Ownership without scope creates confusion. Your AI owner needs to know exactly what they are responsible for. That includes performance benchmarks, error thresholds, data quality standards, and escalation paths when something breaks down.

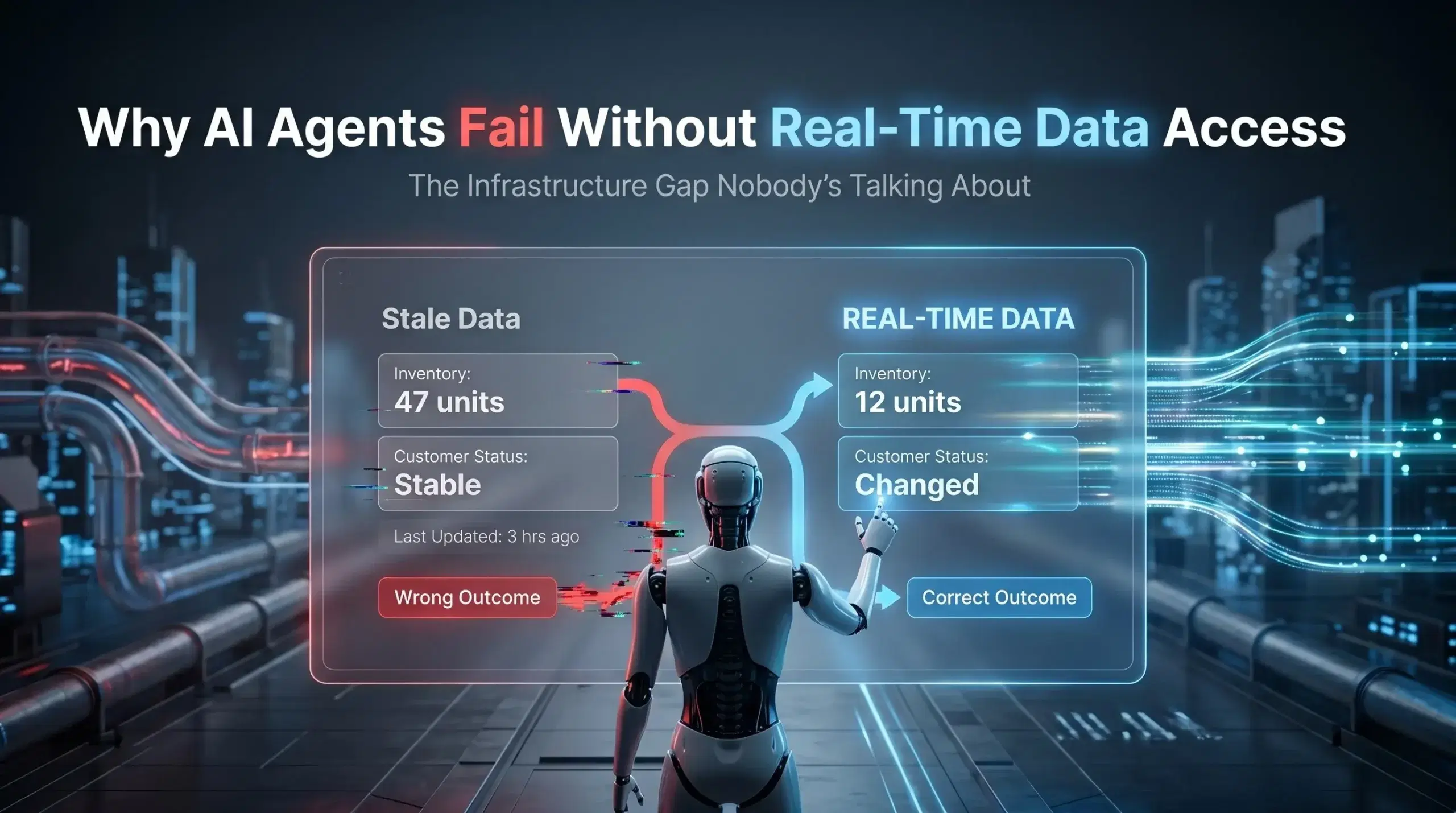

This connects to the broader problem of real-time data access being a hidden readiness gap. An AI owner needs to know whether the agent is accessing live signals or stale data. That is a scope question before it becomes a technical question.

Build In Review Cycles

An AI agent should have a monthly or quarterly performance review the same way a business unit does. The owner leads this review, brings in the right stakeholders, and makes the call on what needs to change. Without structured review cycles, ownership is just a label.

Connect Ownership to Leadership Buy-in

Here is the catch. Ownership only works when leadership actually supports it. If the C-suite treats AI agents as a one-time deployment instead of a living system, your AI owner will be fighting a constant uphill battle. We covered this in our piece on leadership not driving AI adoption as a critical readiness failure. Adoption starts at the top. So does accountability.

How No Clear Ownership Connects to Other AI Readiness Gaps

Ownership is not an isolated problem. It sits at the intersection of almost every other AI readiness gap.

When you have multiple versions of truth creating conflicting data, an AI owner is the person who decides which version the agent trusts. Without that owner, the agent picks arbitrarily and nobody questions it.

When your documentation does not match how work actually happens, the owner is the person who ensures the agent is updated to reflect real processes, not documented ones.

When real-time data access is blocked or incomplete, the owner escalates that dependency and ensures the agent is not making decisions on outdated signals.

And when knowledge is scattered across silos and tools, the owner maps those silos and ensures the agent knows where to look.

The AI owner is, in effect, the connective tissue between your AI investment and the real business it is supposed to serve.

Steps to Fix the AI Ownership Gap Starting This Week

You do not need a six-month governance program to fix this. You need a few clear decisions made this week.

- Audit your deployed agents. List every AI system currently running in your organization. For each one, write down one name next to it. That person is the interim owner starting today.

- Define what ownership means. Create a one-page ownership charter per agent. Include performance KPIs, review frequency, escalation contacts, and change authority.

- Get a leadership sponsor. Every AI owner needs a leadership sponsor who will remove blockers and ensure the ownership role is respected cross-functionally.

- Set a 90-day review. Within 90 days of assigning an owner, conduct a formal performance review of the agent. This creates the first feedback loop and tests whether ownership is working.

- Tie ownership to outcomes. The AI owner should be measured on the outcomes the agent is supposed to deliver, not on whether the agent is running. Running is not the same as performing.
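The one-page ownership charter from the steps above can be kept as structured data so that missing fields are easy to spot. What follows is a minimal sketch, not a prescribed format: the `OwnershipCharter` class, its field names, and the `gaps` helper are all hypothetical, chosen only to mirror the items the list mentions (KPIs, review frequency, escalation contacts, change authority, leadership sponsor, and the 90-day review).

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class OwnershipCharter:
    """Hypothetical one-page charter for a single deployed agent."""
    agent_name: str
    owner: str                      # exactly one named person, not a team
    leadership_sponsor: str
    kpis: dict[str, float]          # KPI name -> target value
    review_frequency_days: int
    escalation_contacts: list[str]
    change_authority: str           # who may approve changes to the agent
    assigned_on: date

    def first_review_due(self) -> date:
        """The first formal review falls 90 days after the owner is assigned."""
        return self.assigned_on + timedelta(days=90)

    def gaps(self) -> list[str]:
        """Return the names of charter fields that are still empty."""
        checks = {
            "owner": self.owner.strip(),
            "leadership_sponsor": self.leadership_sponsor.strip(),
            "kpis": self.kpis,
            "escalation_contacts": self.escalation_contacts,
            "change_authority": self.change_authority.strip(),
        }
        return [name for name, value in checks.items() if not value]
```

A charter with an empty `gaps()` result and a date from `first_review_due()` on the calendar is a reasonable signal that the interim owner has a real mandate rather than just a name in a spreadsheet.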

Is Your Organization Ready to Own Its AI Agents?

Most organizations are not. That is not a criticism. It is just the reality of where enterprise AI adoption is right now. The technology has moved faster than the organizational structures needed to govern it.

The good news is that this is one of the most solvable readiness gaps. It does not require new technology. It does not require a massive budget. It requires a decision: who owns this?

Make that decision for every AI agent you currently have running. Then make it mandatory before every future deployment. It sounds simple because it is. The complexity is in building the organizational culture where ownership is respected, supported, and measured.

If you are serious about AI agent readiness, start with our full readiness framework on the Ysquare Technology LinkedIn page. Each sign in the series connects to the others, and ownership is the thread that runs through all of them.

Final Thought: Ownership Is Not Bureaucracy. It Is How AI Scales.

Every time an AI agent fails quietly in a corner of your organization, it erodes trust in AI as a whole. Teams stop using it. Leadership pulls funding. The technology gets blamed when the problem was always structural.

Defining clear AI ownership is how you prevent that. It is how you build AI that improves month over month instead of decaying from launch day. It is how you turn a one-time deployment into a competitive advantage that compounds over time.

The question is not whether your AI can do the job. The question is whether your organization is structured to support it. Start with ownership. Everything else gets easier from there. And if you want a full picture of where your AI readiness stands today, explore our growing series covering all 15 signs, beginning with how scattered knowledge blocks AI agent performance.

Ysquare Technology

09/06/2026

No Post-Launch Iteration: The Silent Reason Your AI Agents Stop Improving

You spent months building your AI agent. The demo worked beautifully. Leadership approved the rollout. And then you launched. That was six months ago. Here is the question nobody in your organization is asking: is that agent actually getting better?

Most of the time, the honest answer is no. Not because the technology failed, but because the team moved on. There is a deeply ingrained assumption in enterprise AI deployments that launch is the finish line. It is not. Launch is where the real work begins. And skipping the post-launch iteration phase is one of the most expensive mistakes organizations make with AI agents today.

This is part of a broader pattern we have been tracking across enterprise AI readiness. If you have already read about how scattered knowledge silently sabotages your AI agents, you will recognize the theme: the problems that kill AI agent performance are rarely about the model itself. They are, instead, about the organizational infrastructure around it. And no post-launch iteration is one of the most overlooked gaps of all.

The Production Reality

The Composio AI Agent Report 2025 found that 67% of organizations report measurable gains from agent pilots, yet only 10% successfully scale to production. The gap does not sit in the technology. It lives, instead, in what happens, or more accurately what does not happen, after the agent goes live.

What No Post-Launch Iteration Actually Means for Your AI Agents

Let us be clear about what we are talking about. Post-launch iteration for AI agents is the ongoing process of monitoring real-world performance, collecting feedback, identifying failure patterns, and making targeted improvements. In other words, it is the cycle that turns a static deployment into a system that learns and compounds value over time.

Without it, your AI agent becomes frozen at the capability level it had on launch day. That is a serious problem, because the world around it does not stay frozen. Business processes shift, data patterns change, user needs evolve, and edge cases multiply. As a result, what performed well in testing starts encountering situations it was never prepared for in production.

The degradation is rarely dramatic, which is precisely what makes it so dangerous. A real-world case documented by SaaStr describes a team that deployed an AI agent, watched it perform well, and then moved on to other projects. Four months later, the agent had quietly stopped ingesting new data. It kept running and kept producing outputs that looked plausible, but it was operating entirely on stale information. The team only caught it when results started feeling slightly off. Not wrong enough to trigger alarms. Just a little out of step with reality.

This is the operational signature of an AI agent with no iteration loop. Rather than crashing visibly, it just slowly stops being useful.
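A failure like the stale-ingestion case above is cheap to detect if something checks data freshness on a schedule. The sketch below assumes you can obtain the timestamp of the most recently ingested record. The function name `is_stale` and the `max_age` threshold are illustrative and do not come from any particular tool.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def is_stale(
    last_ingested_at: datetime,
    max_age: timedelta,
    now: Optional[datetime] = None,
) -> bool:
    """Return True when the newest ingested record is older than max_age."""
    # Default to the current UTC time; callers can pass `now` for testing.
    now = now or datetime.now(timezone.utc)
    return now - last_ingested_at > max_age
```

Running a check like this daily and routing a `True` result to the agent owner turns a four-month blind spot into an alert within a day.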

Furthermore, the same dynamic is explored in depth in our LinkedIn article on why post-launch iteration is the silent reason your AI agents underperform, which looks at how this pattern shows up across enterprise deployments of every size.

Why AI Agent Performance Stagnation Is Now a Business Risk

The scale of the problem is becoming impossible to ignore. According to a June 2025 Gartner press release, over 40% of agentic AI projects will be canceled by the end of 2027, with escalating costs, unclear business value, and inadequate risk controls as the primary reasons. What does inadequate risk control look like in practice? Often it looks exactly like an agent running in production with no feedback loop and no mechanism for improvement.

McKinsey’s 2025 State of AI report reinforces the picture: fewer than 20% of AI pilots scale to production within 18 months, and only 39% of organizations report any enterprise-level EBIT impact from AI. Consequently, the organizations that are generating real returns are not necessarily the ones with the best models. They are the ones that have built processes for continuous improvement after launch.

Beyond that, research from Lemma, a Y Combinator F25 company building continuous learning infrastructure for AI agents, found that agent performance can drop approximately 40% within weeks of deployment. This happens as real-world input drift introduces user behaviors and edge cases that were not present in testing. That is not a model failure. That is a process failure, and it is entirely preventable with the right iteration infrastructure in place.

The Compounding Cost

High-volume agents processing thousands of transactions daily see measurable accuracy improvements within 30 to 45 days when a feedback loop is active. Without one, however, performance flatlines or silently degrades from day one. The longer you wait to implement iteration, the more ground you have to recover.

The Five Ways No Post-Launch Iteration Damages AI Agent Readiness

Understanding the specific mechanisms of performance stagnation helps you make the case internally for why iteration infrastructure is not optional. Here are the five most common patterns we see.

1. Distribution Shift Goes Undetected

Your agent was trained and tested on a specific snapshot of your business data. The moment it goes live, however, the real world starts diverging from that snapshot. New product lines, updated workflows, seasonal demand shifts, and new customer segments all push the agent away from its original frame of reference. Distribution shift is the technical term for this divergence, and without continuous monitoring, it remains invisible until the agent starts making decisions that feel wrong but are hard to explain.

The connection to your broader data environment is critical here. If your organization already struggles with multiple versions of truth creating conflicting data across systems, distribution shift compounds that problem at speed.
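One common way to put a number on distribution shift is the Population Stability Index (PSI), which compares how inputs were spread across categories at launch with how they are spread today. The sketch below is an illustration under assumptions, not a description of any specific monitoring product: it assumes you have already bucketed requests into the same categories for both periods and converted the counts to proportions.

```python
import math


def population_stability_index(expected: list[float], actual: list[float]) -> float:
    """PSI between two binned distributions given as proportions summing to 1."""
    if len(expected) != len(actual):
        raise ValueError("both distributions must use the same bins")
    eps = 1e-6  # floor so empty bins do not cause log(0) or division by zero
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


# Share of requests per intent at launch vs. this month (made-up numbers).
launch_mix = [0.5, 0.3, 0.2]
current_mix = [0.2, 0.3, 0.5]
drift = population_stability_index(launch_mix, current_mix)
```

A frequently quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift. Treat those cutoffs as a starting point to tune against your own data, not as fixed thresholds.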

2. Edge Cases Accumulate Without Resolution

No pre-launch test suite captures every real-world scenario. Edge cases are inevitable, and therefore the question is not whether your agent will encounter them but whether your organization has a mechanism for identifying, analyzing, and resolving them. Without an iteration process, those edge cases pile up and are never addressed. Each one represents a user who received a wrong or unhelpful response. At scale, this erodes trust in ways that are very difficult to recover from.

3. Business Process Changes Outpace the Agent

Organizations are not static. Processes change, policies update, and teams restructure constantly. As a result, an AI agent trained on how your business operated six months ago becomes increasingly misaligned with how it operates today. This is especially dangerous when the agent is handling workflows that touch customers, finance, or compliance. We have covered the upstream version of this problem in our piece on undocumented workflows and AI automation failures. The same dynamic plays out post-launch when iteration is absent.

4. No Feedback Means No Learning Signal

Research from Dust’s continuous improvement framework is clear on this point: if there is no clear owner for an agent and no time allocated to iterate, agents simply do not improve. Feedback that is never collected cannot drive learning. In addition, many organizations have no structured process for gathering input from the people who interact with the agent every day, whether they are employees or customers.

Because of this, organizations that have no system for measuring AI agent performance after deployment are essentially operating blind. You cannot improve what you are not measuring.

5. Security and Compliance Drift

An agent that handled sensitive data appropriately at launch may not remain compliant as regulations evolve and your data environment changes. Security models built for static systems need regular review when applied to autonomous agents. This is not theoretical: the AI Incidents Database reports that AI-related incidents rose 21% from 2024 to 2025. Furthermore, many of those incidents involve agents that were operating outside their original governance parameters without anyone noticing.

For a detailed look at why security frameworks designed for human operators fail AI agents, our blog post on security models built only for humans creating AI agent vulnerabilities covers the specific gaps that post-launch monitoring needs to close.

How Post-Launch Iteration Actually Works in Practice

Here is the thing: building an iteration loop for your AI agent does not require a separate engineering team or a six-month project. It requires clarity about four things.

Continuous Monitoring with Automated Evaluation

You need a system that scores agent responses against accuracy, helpfulness, and task completion on an ongoing basis, not just in pre-launch testing. Leading evaluation frameworks now support LLM-as-a-judge scoring, where a secondary model reviews a sample of production outputs and generates quality scores. Performance is graphed over time, and alerts fire when quality degrades. As a result, you find out from a dashboard rather than from an angry user or a manager who noticed something felt off.
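The "alerts fire when quality degrades" part can be sketched in a few lines. This example assumes each sampled production response has already been given a quality score between 0 and 1, whether by an LLM judge or a human reviewer. The scoring step itself is not shown, and the `QualityMonitor` class, its parameters, and its defaults are hypothetical.

```python
from collections import deque
from statistics import mean


class QualityMonitor:
    """Tracks a rolling average of quality scores and flags degradation."""

    def __init__(self, baseline: float, window: int = 50, max_drop: float = 0.10):
        self.baseline = baseline      # average score measured at launch (assumed known)
        self.max_drop = max_drop      # tolerated absolute drop before alerting
        self.scores: deque = deque(maxlen=window)

    def record(self, score: float) -> bool:
        """Add one score in [0, 1]; return True when an alert should fire."""
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return False              # wait for a full window before judging
        return mean(self.scores) < self.baseline - self.max_drop
```

Using a rolling window rather than individual scores keeps a single bad response from paging anyone, while a sustained decline still surfaces within one window of samples.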

Structured Feedback Collection from Real Users

The people using your agent every day are your best source of iteration signal. Building a lightweight, structured mechanism for them to flag unhelpful or incorrect responses turns anecdotal frustration into actionable data. Fortunately, the feedback does not need to be complex. A simple thumbs-down with a category tag is enough to surface patterns.
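To show how little structure is needed, here is a minimal sketch of a thumbs-down record with a category tag, plus a tally that surfaces the most common failure categories. The `Feedback` fields and the category names are invented for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Feedback:
    response_id: str
    helpful: bool          # False corresponds to a thumbs-down
    category: str = ""     # tag picked by the user, e.g. "outdated_info"


def top_failure_categories(items: list[Feedback], n: int = 3) -> list[tuple[str, int]]:
    """Count thumbs-down feedback per category, most frequent first."""
    counts = Counter(
        f.category or "uncategorized" for f in items if not f.helpful
    )
    return counts.most_common(n)
```

The output of `top_failure_categories` is a ranked list of (category, count) pairs, which is usually enough to decide what the next targeted fix should be.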

Beyond flagging errors, your approval and review layer for AI outputs becomes a source of iteration data, not just a quality gate. Every human review generates a signal about where the agent’s judgment diverged from the expected outcome.

Targeted, Incremental Updates

The most common mistake in post-launch iteration is trying to overhaul the agent when a targeted edit would suffice. The Dust framework recommends starting with the top failure mode surfaced by your monitoring, making a surgical change to instructions, data sources, or parameters, testing with a small group, and then rolling out broadly. Small, targeted changes are easier to test and, equally important, easier to roll back if something breaks.

This is the iteration mentality that software engineering teams have applied for decades. AI agents deserve the same discipline. Ship, measure, learn, and improve. Then repeat.

Ownership and Accountability

No iteration loop survives without a named owner. Someone in your organization needs to be responsible for the agent’s ongoing performance, with time explicitly allocated to the iteration process. Without this structure, feedback goes nowhere and insights gather dust. This gap is directly linked to the leadership ownership gap that keeps AI agents underperforming across enterprises, a pattern our piece on leadership not driving AI adoption examines from the top down.

What Your AI Agent Ecosystem Looks Like Without Iteration

Let us paint the picture honestly. Six months after launch, an AI agent with no iteration process typically looks like this:

- Performance has plateaued or quietly declined from its peak at launch

- Users have developed workarounds for the edge cases the agent handles poorly

- Business process changes have introduced misalignments the agent has no way to know about

- The team that built the agent has moved on to the next project

- Nobody has a clear picture of what the agent is actually doing at scale

This is not a hypothetical. It is the operational reality for a significant portion of enterprise AI deployments today. The Composio 2025 report’s finding that only 10% of organizations successfully scale agent pilots to production reflects both a pre-launch problem and a post-launch one. Many organizations reach production and then fail to sustain it because there is no iteration infrastructure keeping the agent aligned with reality.

The data quality dimension makes this even more acute. If your agent is operating on real-time data access gaps that leave it working from outdated information, the absence of post-launch iteration means those gaps compound rather than get resolved. Consequently, the agent becomes increasingly disconnected from the current state of your business.

Building the Case for Post-Launch Iteration Internally

If you are a technology leader reading this, you likely already know the iteration gap exists in your organization. The challenge, however, is making the case for dedicated iteration resources in an environment where the initial deployment already consumed significant budget and attention.

Frame It as a Cost of Stagnation, Not a Cost of Iteration

Here is the framing that tends to land with business stakeholders. Your AI agent is a revenue or efficiency-linked system. Its current performance level represents a baseline, and therefore every week you do not iterate is a week you are leaving potential improvement on the table. Every edge case that accumulates represents a customer interaction or process step where the agent is actively failing. The cost of not iterating is not zero. It is the cumulative sum of all those missed improvements and unresolved failures.

Anchor to ROI Evidence

McKinsey data shows that organizations achieving real ROI from AI are not necessarily using better models. Instead, they are applying better operational discipline to the systems they have. The 5.8x ROI on AI investment within 14 months that McKinsey’s research documents is not achieved by deploying and forgetting. It is achieved by deploying, measuring, iterating, and compounding gains over time.

Include Documentation Teams in the Conversation

Beyond the commercial case, the technical teams building documentation for your agent also need to be part of this discussion. If your documentation does not reflect how AI agents actually make decisions in the field, iteration becomes much harder because you have no reliable baseline to measure against.

Practical Steps to Start Your Post-Launch Iteration Process Today

You do not need to wait for a perfect system. You need to start. Here is a practical sequence that works for organizations at every stage of AI maturity.

Step 1: Assign an Agent Owner

Name a single person responsible for the ongoing performance of each production AI agent. While this does not need to be a full-time role, it needs to be a named accountability. Without ownership, everything else in this list will fail to stick.

Step 2: Define Your Performance Baseline

Before you can track improvement, you need to know where you are starting. Pull your current task completion rates, user satisfaction signals, and error patterns. If you do not have this data yet, the first iteration sprint should focus on instrumentation: getting the logging and monitoring in place so you have something to measure against.
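As a rough illustration of what a baseline can look like once logging is in place, the sketch below computes the three signals mentioned above from a list of logged interactions. The `Interaction` record and its fields are assumptions about what your logs contain, not a required schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Interaction:
    completed: bool                 # did the agent finish the task?
    error: bool                     # was the output flagged as wrong?
    satisfaction: Optional[int]     # 1-5 user rating, None if not rated


def baseline(interactions: list[Interaction]) -> dict[str, Optional[float]]:
    """Summarize completion rate, error rate, and average satisfaction."""
    if not interactions:
        raise ValueError("need at least one logged interaction")
    total = len(interactions)
    ratings = [i.satisfaction for i in interactions if i.satisfaction is not None]
    return {
        "completion_rate": sum(i.completed for i in interactions) / total,
        "error_rate": sum(i.error for i in interactions) / total,
        # Average only over interactions that were actually rated.
        "avg_satisfaction": sum(ratings) / len(ratings) if ratings else None,
    }
```

Saving this summary with a date gives every later review something concrete to compare against.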

Step 3: Run a Weekly Feedback Review

Set a recurring thirty-minute review where the agent owner looks at the feedback and error data from the previous week. Identify the top failure pattern. Then make one targeted improvement, not a full rebuild. Test it, observe the impact, and repeat next week.

Step 4: Connect Your Iteration Loop to Your Data Infrastructure

The iteration process only works if the agent is operating on accurate, current data. If scattered knowledge across your organization is limiting what your AI agents can access, your iteration loop needs to include data quality improvements, not just prompt tuning.

Step 5: Make Iteration Part of Your AI Governance Framework

Finally, post-launch iteration should not be an informal practice that depends on individual initiative. It should be a documented process with scheduled reviews, defined metrics, and governance sign-off for significant changes. This is what turns a good AI deployment into a sustainable one.

The Real Question Is Not Whether to Iterate. It Is How Long You Can Afford Not To.

Here is a perspective shift worth sitting with. Every enterprise software system your organization depends on gets maintained, updated, and improved on a regular cycle. Nobody deploys a CRM or an ERP and then never touches it again. Yet that is exactly the treatment many organizations give their AI agents, and then they wonder why the results plateau.

AI agents are not set-and-forget tools. They are living systems that operate in changing environments and need ongoing attention to stay aligned with your business reality. Therefore, the organizations that will generate lasting ROI from AI are the ones building the discipline of continuous iteration into their deployment model from day one.

Gartner’s warning that over 40% of agentic AI projects will be canceled by end of 2027 is not a verdict on AI technology. Rather, it is a verdict on AI deployment practices. The technology works. The processes around it are, however, still catching up. Post-launch iteration is one of the places where closing that gap makes the most immediate difference.

If you are building AI agents at scale and want to make sure iteration is built into your readiness model from the ground up, connect with the Ysquare Technology team on LinkedIn to explore how we approach enterprise AI agent deployment with long-term performance in mind.

Ysquare Technology

05/06/2026

Why Leadership Must Drive AI Agent Adoption Across the Organization

Here is a question worth sitting with: Your company just spent six figures on AI tools. Your IT team built the pilots. Your vendor gave three onboarding sessions. And yet, six months in, adoption across the organization is hovering somewhere between “low” and “invisible.”

Sound familiar?

This is not a technology problem. It is not a budget problem. And it is definitely not a problem your IT team can fix on their own.

When leadership isn’t driving AI adoption, everything else you do to push it forward is just noise. Teams take their cues from the top. If they don’t see their managers, directors, and executives actively using AI, talking about AI, and holding people accountable to AI outcomes, then AI becomes just another initiative that will quietly fade away after the next quarterly review.

The data backs this up. McKinsey’s 2025 Workplace AI report surveyed 3,613 employees and 238 C-level executives and found that employees are ready for AI, but leaders are not steering fast enough. The biggest barrier to success is leadership.

That is not a small finding. That is the finding. And if you’re a CEO, CTO, or senior business leader, this one is squarely on your desk.

Why Absent Leadership Is the Real Bottleneck in AI Adoption

Most organizations frame AI adoption as a rollout problem. They build a roadmap, pick a vendor, set up training sessions, and wait for adoption to happen. It doesn’t. Because adoption isn’t a rollout problem. It’s a culture problem, and culture is set by leaders.

Think about how any new behavior spreads inside a company. People don’t change how they work because they attended a webinar. They change because they see their peers doing things differently, because their manager asks them different questions, and because their performance is measured against different outcomes. None of that happens without leadership actively driving it.

When executives treat AI as someone else’s responsibility, a few predictable things occur. Teams see AI as optional. Middle managers don’t prioritize it. Budgets get questioned at renewal time. And the early adopters who were genuinely excited burn out trying to evangelize uphill without any support.

McKinsey’s research shows that AI high performers are three times more likely to have senior leaders who demonstrate ownership of and commitment to their AI initiatives. Those same leaders actively use AI themselves and role-model the behavior they want to see across the organization.

That three-times multiplier isn’t marginal. It’s the difference between companies that are genuinely transforming and companies that are running expensive pilots forever.

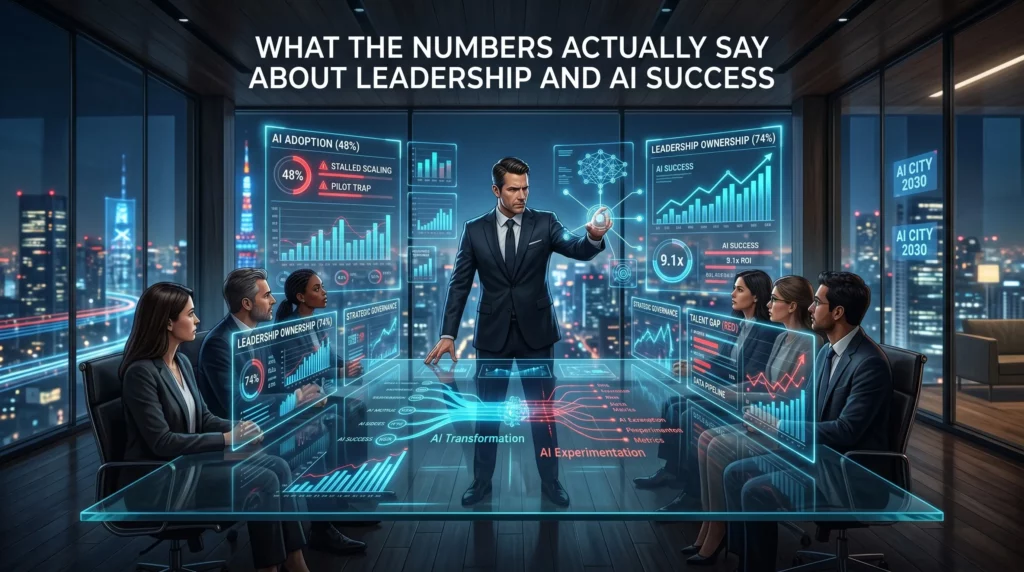

What the Numbers Actually Say About Leadership and AI Success

The statistics here are sobering, and leaders need to face them honestly.

According to McKinsey’s 2025 State of AI report, 88% of organizations reported regular AI use in at least one business function in 2025, compared with 78% a year earlier. But only about one-third have begun scaling AI programs across the organization. The gap between “we’re using AI somewhere” and “AI is changing how we operate” is enormous, and leadership behavior sits right in the middle of it.

A 2025 report from WRITER, which surveyed 1,600 knowledge workers including 800 C-suite executives, found that more than one in three executives describe their generative AI adoption as a “massive disappointment.” Two-thirds of C-suite leaders reported tension between IT teams and other business units around AI implementation.

Here’s the number that should alarm every board room: Only 28% of organizations report that their CEO takes direct responsibility for AI governance and oversight. Yet the companies where the CEO is directly involved in AI governance report meaningfully higher business impact from their AI investments.

The math is simple. When the CEO owns it, it gets resourced, prioritized, and measured. When AI is delegated to a single team, it gets stuck.

McKinsey’s March 2025 report, “How Organizations Are Rewiring to Capture Value,” reinforces this directly: only 28% of respondents whose organizations use AI say their CEO oversees AI governance, and CEO oversight is strongly correlated with higher self-reported bottom-line impact.

The IBM Watson Story: A Masterclass in What Happens Without Real Governance

No case study on AI adoption failure is more instructive than the story of IBM Watson for Oncology.

IBM positioned Watson Health as a moonshot. The technology would democratize elite oncology expertise, helping clinicians around the world make better cancer treatment decisions. IBM committed billions of dollars. The marketing was confident. The promise was enormous.

What actually happened was a governance and leadership failure at scale.

The system was developed with training data curated by a small group of physicians using hypothetical patient cases, not real clinical data. When hospitals tried to deploy it in the real world, the recommendations were often inconsistent with national treatment guidelines. One physician at a Florida hospital told IBM executives the system was “worthless” for most cases, and that the hospital had bought it largely for marketing purposes.

When MD Anderson Cancer Center, one of Watson’s most prominent partners, transitioned from its legacy EHR system to Epic Systems, Watson couldn’t access live patient data. A $62 million investment became, in the words of one review, a “custom demo.”

In 2022, IBM announced the sale of Watson Health’s healthcare data and analytics assets to Francisco Partners. Financial terms were not officially disclosed, though reports placed the deal at more than $1 billion, a figure widely understood to represent a fraction of the total capital invested in acquisitions, development, and deployment across the life of the program.

The core failure wasn’t the technology itself. As researchers and analysts have since noted, the problem was structural and organizational. IBM’s leadership scaled the product before the conditions for it to work were established. There was no rigorous governance to catch the gap between what was being promised externally and what was actually possible internally. Clinical experts weren’t embedded deeply enough. The business case was built on narrative rather than evidence.

This is precisely what happens when AI adoption is treated as a product launch rather than as an organization-wide capability change that requires sustained leadership ownership at every level.

Source: Henrico Dolfing Case Study Analysis, December 2024

What Leaders Actually Need to Do Differently

The answer to “leadership isn’t driving AI adoption” isn’t to send another memo or mandate a new tool. It is to change behavior, specifically leadership behavior, in visible and consistent ways.

Here’s what that looks like in practice.

Use the tools publicly. When a CEO shares that they used AI to prepare for a board meeting, or a VP mentions in a team call that they ran a prompt to summarize competitive research, those small moments signal that AI is real, not aspirational. Visibility matters enormously.

Ask AI-related questions in reviews. If the only metrics being reviewed are the same ones from two years ago, nothing changes. Leaders who ask “how did we use AI to get this result?” or “where did AI save us time this quarter?” are reshaping what the team pays attention to.

Assign explicit ownership. Not a committee. Not a shared responsibility. One named person whose job includes making AI adoption work, with a budget, a timeline, and reporting lines directly into leadership. As our analysis of why leadership must drive AI agent adoption shows, the moment there is no single owner, accountability evaporates.

Remove the barriers teams face. Most frontline employees aren’t anti-AI. They’re time-poor, risk-averse, and waiting for permission. Leaders need to create psychological safety around experimentation, reduce the bureaucratic friction around tool access, and make it easy to try things without fear of looking incompetent.

Tie AI outcomes to performance conversations. What gets measured gets done. When teams know that AI capability building is part of how they are evaluated, they prioritize it.

The Readiness Problem Leaders Keep Ignoring

Leadership behavior is only one part of the equation. Even the most committed executive can’t drive adoption if the organization’s infrastructure isn’t ready for AI agents to work.

This is a critical point that gets skipped in most leadership conversations about AI.

Your AI agents are only as reliable as the data and systems they operate in. If knowledge is scattered across tools and teams, agents won’t find what they need. We cover this challenge in depth in our piece on why scattered knowledge is silently sabotaging your AI, and in our blog on scattered knowledge and AI agent readiness.

If your documented processes don’t reflect how work actually happens, agents will make decisions based on outdated or wrong information. This is explored in our piece on what happens when your documentation lies, and in our undocumented workflows blog.

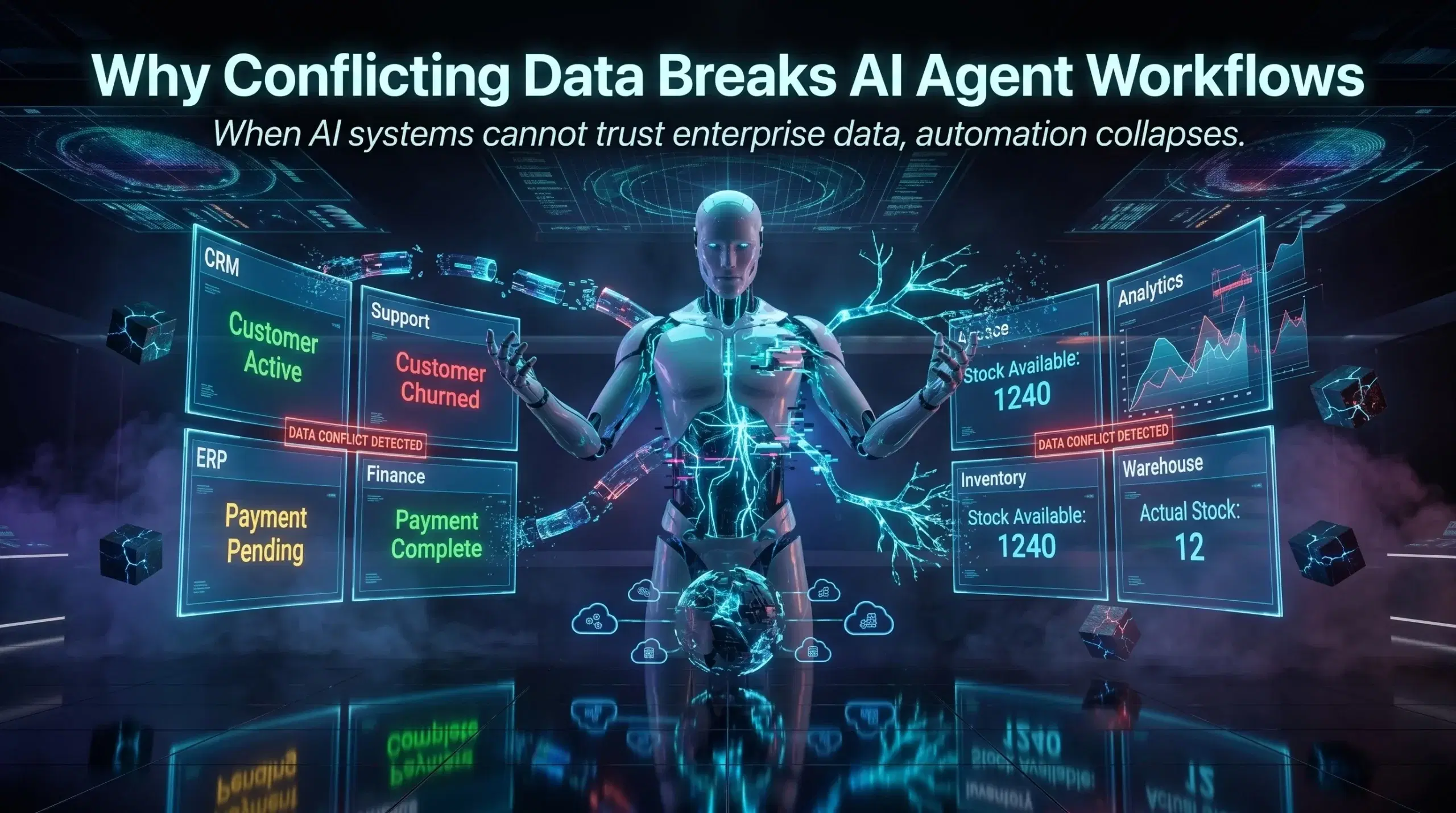

If different teams are working from different versions of the same data, the conflict kills AI decision quality before it even starts. Our article on multiple versions of truth and why conflicting data kills your AI makes this concrete, and our blog on multiple versions of truth walks through the fix.

If agents can’t access real-time data, every decision they make is already stale. We break this down in why real-time data access is the hidden reason your AI agents stall and in our blog on AI agents failing without real-time data access.

And if there are no approval or review layers, no metrics for performance, and security systems that were designed for humans rather than autonomous agents, you’re not just slowing adoption down. You’re creating risk. These exact gaps are covered in our deep dives on AI agents with no approval or review layer, security built only for humans, and no metrics for AI performance.

Leaders who genuinely want to drive AI adoption have to ask: are we actually ready for agents to operate here? Or are we trying to drive on a road that hasn’t been built yet?

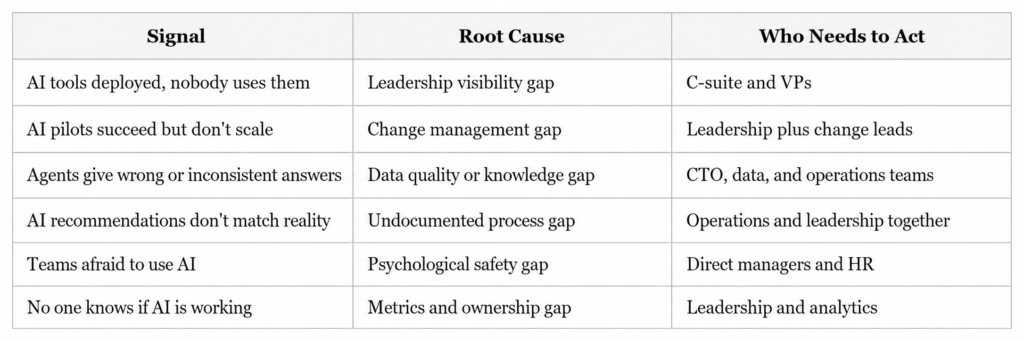

The Leadership Gap vs. The Readiness Gap: A Practical Framework

Understanding both gaps helps you prioritize the right interventions. Here is a simple way to think about where your organization stands.

Most organizations have problems in multiple columns at once. The common thread is that none of these get fixed without leadership actively identifying the problem, naming it publicly, and committing resources to solve it.

Three Questions Every Leadership Team Should Answer This Quarter

If you’re serious about closing the gap between “we have AI” and “AI is working for us,” start with these three questions in your next leadership session.

One: Where is AI visibly showing up in our leadership behavior? Not in slides. In actual day-to-day decisions, communications, and reviews. If the honest answer is “not really anywhere,” that’s where to start.

Two: Who owns AI outcomes across this organization? Not IT. Not a vendor. A named individual with authority, accountability, and a direct line to leadership. If you can’t answer this in thirty seconds, ownership doesn’t exist.

Three: What does success look like in ninety days? Not annual ROI projections. A concrete, measurable outcome that proves the investment is moving in the right direction. If there’s no near-term success metric, there’s no accountability loop.

These aren’t complicated questions. But they require an honest conversation that many leadership teams keep avoiding because they’re busy and because the status quo feels comfortable.

The status quo, meanwhile, is getting more expensive every quarter.

What High-Performing Organizations Do Differently

McKinsey’s research identifies a consistent pattern among AI high performers. They’re not necessarily the companies with the biggest budgets or the most sophisticated technology. They’re the companies where senior leaders demonstrate visible ownership of AI initiatives, actively use AI themselves, and role-model the adoption behavior they want to see.

These organizations treat AI not as an IT capability but as a business capability. The difference in framing changes everything: who owns it, how it’s resourced, how progress is measured, and how it’s talked about internally.

They also do something that most organizations skip. They redesign workflows rather than bolting AI onto existing ones. Leaders at these companies are willing to ask harder questions about how work actually flows, where decisions get made, and what needs to change structurally for AI to deliver real value.

That kind of organizational introspection doesn’t happen at the team level. It requires leadership to drive it.

Conclusion: Adoption Starts at the Top, Not at the Tool

There’s a version of this story that ends well, and a version that doesn’t. The difference isn’t the quality of the AI tools, the size of the implementation budget, or the enthusiasm of the early adopters.

The difference is whether your leaders treat AI as someone else’s problem or as their own.

When leadership isn’t driving AI adoption, you get pilots without scale, investments without returns, and teams that quietly go back to doing things the way they always have. When leadership does drive it, you get the 3x performance multiplier McKinsey observed. You get teams that feel permission and urgency to change. You get an organization that actually transforms.

The infographic above puts it plainly: “If leaders don’t actively use AI, teams won’t prioritize it. Adoption starts at the top.” That’s not a motivational phrase. That is an operational truth backed by the data.

Your next move is not another pilot. It’s a leadership conversation about ownership, visibility, and accountability. Start there, and everything else becomes easier.

Ready to Assess Your AI Agent Readiness?

At Ysquare Technology, we help enterprise and growth-stage companies identify exactly where their AI adoption is breaking down and what leadership, data, and infrastructure changes are needed to fix it.

If your AI investments aren’t delivering what you expected, the problem is almost certainly upstream of the technology. Let’s find it together.

Connect with us on LinkedIn or visit www.ysquaretechnology.com to start the conversation.

Ysquare Technology

01/06/2026

AI Performance Metrics: Why Your AI Is Losing Money

Most leaders think deploying AI is the hard part. It is not. Running AI without any way to measure whether it is actually working, that is the hard part. And right now, a startling number of organizations are doing exactly that.

Here is what most people miss: deploying an AI agent without performance metrics is not neutral. It is a slow bleed. Every day the system runs without measurement, errors go undetected, costs drift upward, and the gap between what you expected and what you are getting quietly widens. By the time someone notices, the damage is already embedded in your operations.

This article is for CEOs, CTOs, and technology leaders who are serious about getting real business value from AI, not just deploying it and hoping for the best. If your AI agents are live but you cannot answer the question “Is this working and how do we know?”, keep reading. We are going to change that.

Why “No Metrics for AI Performance” Is Sign Number Eight on the AI Readiness Watchlist

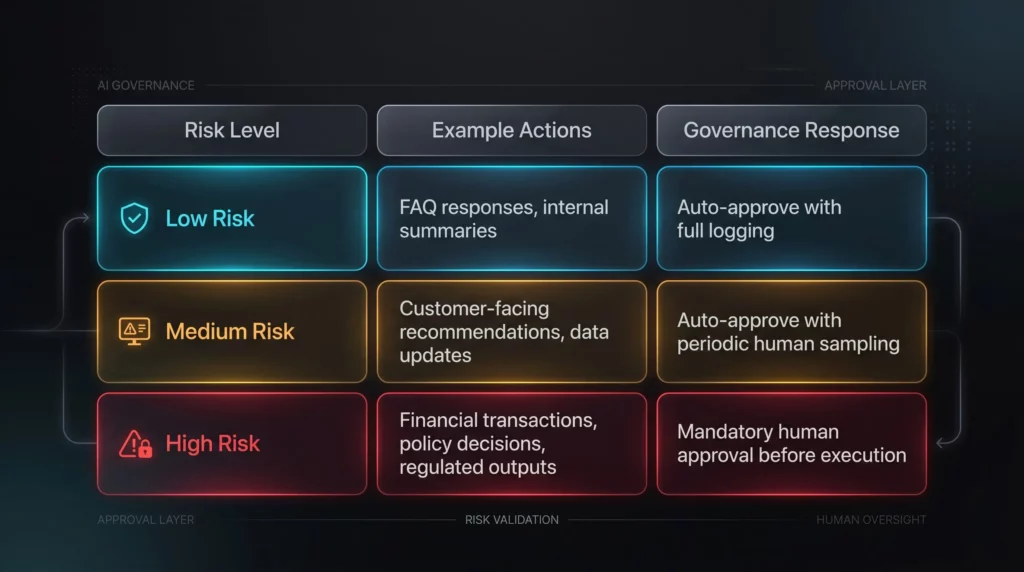

When we talk about the 15 signs your organization is not ready for AI agents, the absence of AI performance metrics sits at number eight for a reason. It sits squarely in the middle because it is the hinge. Everything before it, from scattered knowledge and undocumented workflows to poor data quality and no approval layers, creates conditions where AI fails. But without measurement, you never know which of those failures is happening, or how badly.

The phrase “what gets measured gets optimized” sounds like a motivational poster. In AI operations, however, it is a survival principle. Without a measurement layer, your AI agent has no feedback mechanism. It cannot improve because nothing tells it, or you, when it is wrong. Mistakes that a human reviewer would catch in a traditional workflow scale silently through automated systems until they surface as a business problem rather than an AI problem.

This is the real danger. Not that your AI will fail dramatically on day one. But that it will fail quietly, incrementally, across thousands of interactions, and you will have no idea until the downstream consequences surface in your P&L, your customer satisfaction scores, or your compliance audit.

What the Data Actually Says About AI Measurement

The numbers here are genuinely alarming, and they deserve to be seen clearly rather than buried in footnotes.

McKinsey’s research confirms that fewer than 20% of organizations track well-defined KPIs for their GenAI solutions. That means more than four out of five organizations are running AI without a structured measurement framework. According to the same research, scaling AI without defined metrics is consistently cited as the primary reason AI programs stall out before they deliver value.

Gartner’s AI Maturity Survey found that only 63% of high-maturity organizations, the ones already considered advanced in AI adoption, run financial risk analysis, ROI analysis, and measure customer impact in any structured way. Think about what that means for organizations still in earlier stages of the journey.

Deloitte’s State of GenAI 2024 report found that 41% of business leaders openly admit they struggle to measure AI’s impact on their operations. IBM’s ROI of AI Report, conducted by Morning Consult, put the positive ROI figure at just 47%. More than half of companies investing in AI cannot confirm they are seeing returns.

McKinsey’s Superagency in the Workplace report found that 92% of companies plan to increase their AI investments over the next three years, while only 1% of leaders describe their companies as mature in AI deployment. The message is clear: AI investment is accelerating, but AI operating maturity is still far behind.

This is not an AI problem. It is a management problem. And it is one that can be fixed.

What “No AI Performance Metrics” Actually Looks Like Inside an Organization

It rarely looks like chaos. That is part of what makes it so hard to catch. Here is what it actually looks like day to day.

Your dashboards show activity, not outcomes. You can see how many tasks the AI agent processed, how many queries it responded to, how many workflows it touched. What the dashboard does not show is whether any of that activity produced a better result than what you had before. Volume is not value.

Improvement happens by accident when it happens at all. Without baselines and benchmarks, you have no way to distinguish a genuine performance gain from random variance. Your AI might get better over time, or it might quietly degrade. You will have no way to tell the difference until something breaks loudly enough to notice.
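One way to separate a genuine gain from random variance is a standard two-proportion z-test on a success rate measured before and after deployment. The sketch below is illustrative only: the function name, the sample counts, and the choice of this particular test are assumptions, not something the article prescribes.

```python
import math


def two_proportion_z(success_before, total_before, success_after, total_after):
    """Return (z, two-sided p-value) for the change between two success rates.

    Hypothetical helper: uses the pooled two-proportion z-test, a common
    textbook check for whether a rate change is larger than chance.
    """
    p_before = success_before / total_before
    p_after = success_after / total_after
    pooled = (success_before + success_after) / (total_before + total_after)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_before + 1 / total_after))
    if std_err == 0:
        return 0.0, 1.0  # identical, degenerate samples: no evidence of change
    z = (p_after - p_before) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value


# Made-up numbers: resolution rate moves from 70% to 73%.
z_small, p_small = two_proportion_z(700, 1000, 730, 1000)      # 1,000 cases each
z_large, p_large = two_proportion_z(7000, 10000, 7300, 10000)  # 10,000 cases each
```

With these made-up counts, the same three-point improvement is not distinguishable from noise at 1,000 cases per period but is clearly significant at 10,000, which is why a baseline with a known sample size matters.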

The AI team and the business team are measuring different things. Engineers track uptime, latency, and model accuracy. Business leaders track revenue, customer satisfaction, and operational costs. With no shared measurement framework, these two groups are essentially working on different problems and calling them the same project.

Errors compound before anyone catches them. This connects directly to the risk of running AI without an approval or review layer in your workflows. If you want to understand how unreviewed AI outputs scale into operational risk, the breakdown of what happens when no approval or review layer exists in your AI setup makes the connection concrete. Without metrics, you cannot see errors accumulating. Without a review layer, you cannot stop them from spreading.

The IBM and MD Anderson Case Study: A Sixty-Two-Million-Dollar Lesson in Missing Metrics

When people ask for a real-world example of what it costs to run AI without a clear measurement and validation framework, this is the one that belongs in every boardroom conversation.

IBM and MD Anderson Cancer Center partnered to build the Oncology Expert Advisor, a Watson-powered advisory tool designed to assist oncologists in clinical decision-making. The project was well-funded, medically ambitious, and backed by genuine intent to improve patient care. A prototype was tested in the leukemia department.

MD Anderson cancelled the project in 2016 after spending approximately sixty-two million dollars. As reported by IEEE Spectrum, the system never became a commercial product. The project ran into serious difficulties with the realities of clinical data, including the complexity of electronic health records, validation challenges, and the absence of clear performance checkpoints that would have allowed teams to catch integration problems early and course-correct before costs escalated.

The lesson is not that AI cannot work in healthcare. It absolutely can, and does. The lesson is that high-stakes AI needs clear success criteria, clinical validation standards, integration readiness checks, and measurable performance milestones before it moves toward production deployment. Without those checkpoints built in from the start, you have no mechanism to identify failure until the budget is already spent.

Source: IEEE Spectrum, “IBM Watson, Heal Thyself: How IBM Overpromised and Underdelivered on AI Health Care.”

The AI Performance Metrics That Actually Move the Needle

Here is where most measurement frameworks go wrong. They measure what is easy to pull from a system log rather than what tells you whether the AI is creating business value. Let us fix that.

Accuracy and Quality Metrics

First, you need to know whether the AI is producing correct, useful outputs. The most practical ones to track are task completion rate (did the agent finish what it was asked to do), recommendation acceptance rate (when the AI suggests something, how often do humans agree it was right), and error rate per thousand interactions. Furthermore, if your AI is producing outputs that humans routinely override or correct, that pattern is itself a critical data point.
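As a rough sketch, the three accuracy metrics named above can be computed from a log of interactions. The `Interaction` record and its field names are hypothetical; your own logging schema will differ.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    """Hypothetical log record for one agent interaction."""
    completed: bool    # the agent finished the task it was asked to do
    recommended: bool  # the agent made a recommendation a human reviewed
    accepted: bool     # the human agreed with that recommendation
    error: bool        # the output was later flagged as wrong


def accuracy_metrics(interactions):
    """Compute completion rate, acceptance rate, and errors per thousand."""
    total = len(interactions)
    if total == 0:
        raise ValueError("no interactions to measure")
    reviewed = [i for i in interactions if i.recommended]
    acceptance = (
        sum(i.accepted for i in reviewed) / len(reviewed) if reviewed else None
    )
    return {
        "task_completion_rate": sum(i.completed for i in interactions) / total,
        "recommendation_acceptance_rate": acceptance,
        "errors_per_thousand": 1000 * sum(i.error for i in interactions) / total,
    }


sample = [
    Interaction(completed=True, recommended=True, accepted=True, error=False),
    Interaction(completed=True, recommended=True, accepted=False, error=True),
    Interaction(completed=True, recommended=False, accepted=False, error=False),
    Interaction(completed=False, recommended=False, accepted=False, error=False),
]
metrics = accuracy_metrics(sample)
```

The acceptance rate is computed only over interactions where a recommendation was actually reviewed, so a low review volume does not masquerade as a low acceptance rate.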

Efficiency Metrics

Beyond accuracy, efficiency metrics connect AI activity directly to cost and speed. Compare average handling time before and after AI deployment on the same process. Track cost per task completed. Measure the ratio of AI-resolved interactions to human-escalated ones. As a result, you will know quickly whether the AI is automating volume while also increasing cost per unit, which happens more often than most leaders expect.
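The efficiency comparisons described above reduce to a few ratios. The following is a minimal sketch with hypothetical parameter names and made-up figures; it also flags the case where volume is automated but cost per task has risen against the pre-AI baseline.

```python
def efficiency_metrics(
    baseline_minutes,        # average handling time before AI deployment
    current_minutes,         # average handling time after AI deployment
    baseline_cost_per_task,  # cost per task before AI deployment
    total_cost,              # total current cost of running the process
    tasks_completed,         # tasks completed in the same period
    ai_resolved,             # interactions the AI resolved on its own
    escalated,               # interactions handed to a human
):
    """Hypothetical helper: handling-time change, cost per task, resolution ratio."""
    cost_per_task = total_cost / tasks_completed
    return {
        "handling_time_change_pct": 100 * (current_minutes - baseline_minutes) / baseline_minutes,
        "cost_per_task": cost_per_task,
        "cost_per_task_increased": cost_per_task > baseline_cost_per_task,
        # None when nothing was escalated, to avoid dividing by zero
        "resolved_to_escalated_ratio": ai_resolved / escalated if escalated else None,
    }


# Made-up figures for one month of a support workflow.
result = efficiency_metrics(
    baseline_minutes=12.0,
    current_minutes=9.0,
    baseline_cost_per_task=2.0,
    total_cost=5000.0,
    tasks_completed=2000,
    ai_resolved=1500,
    escalated=500,
)
```

In this made-up case handling time falls by a quarter while cost per task rises from 2.0 to 2.5, which is exactly the pattern the paragraph above warns about.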

Business Impact Metrics

These are, ultimately, the ones that justify the budget conversation. How much revenue has AI-assisted decisions influenced? What has happened to customer satisfaction scores in workflows the AI now touches? Are operational costs in targeted areas trending down or up? In short, these metrics transform AI from an IT project into a business strategy.

Risk and Safety Metrics

Finally, risk and safety metrics are consistently the most overlooked category. Track the rate at which AI-generated outputs require human correction after the fact. Monitor escalation volumes for signals that the AI receives requests outside its reliable range. Run regular compliance checks on AI-involved decisions. These metrics are your early warning system, and without them, you are operating blind.
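An early warning check on escalation volumes can be as simple as comparing the latest week with the weeks before it. This sketch assumes weekly escalation counts and a two-standard-deviation threshold; both the function names and the threshold are illustrative assumptions rather than a recommended standard.

```python
from statistics import mean, pstdev


def correction_rate(corrected_after_fact, total_outputs):
    """Share of AI outputs that a human had to correct after the fact."""
    return corrected_after_fact / total_outputs


def escalation_spike(weekly_escalations, sigma=2.0):
    """Return True if the latest week exceeds the prior weeks' mean by more
    than `sigma` population standard deviations (hypothetical threshold)."""
    *history, latest = weekly_escalations
    if len(history) < 2:
        return False  # not enough history to judge
    threshold = mean(history) + sigma * pstdev(history)
    return latest > threshold


# Made-up weekly escalation counts; the last value is the current week.
spike = escalation_spike([40, 42, 38, 41, 60])
steady = escalation_spike([40, 42, 38, 41, 42])
rate = correction_rate(30, 1000)
```

A spike does not say what went wrong, only that the agent may be receiving requests outside its reliable range and that someone should look.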

If your data quality is inconsistent across systems, all of these metrics will be unreliable at the source. This is why addressing multiple versions of truth in your data is not a separate workstream from building an AI measurement framework. They are the same problem looked at from two angles.

Why Most AI Measurement Frameworks Fail Before They Start

Here is the catch that most implementation guides skip over. Building a metrics framework after deployment is significantly harder than building it before. And most organizations try to do exactly that.

By the time you realize you need measurement, your AI has already been running for weeks or months. You have no baseline to compare against. The teams closest to the pre-AI process have moved on to other priorities. Moreover, real-world inputs have already shaped the AI’s behavior in ways that teams never benchmarked, so there is nothing meaningful to measure improvement against.

This is why the measurement conversation needs to happen before go-live, not after. When you design the AI agent’s workflow, that is when you define success. What does this agent need to accomplish for this deployment to be worthwhile? Write it down in specific, measurable terms. That sentence becomes your first performance metric.

The other failure pattern is assigning measurement responsibility to nobody in particular. Metrics without owners are decoration. Someone on your team needs to own each KPI, report on it regularly, and have the authority to escalate when it moves in the wrong direction. If measurement is everyone’s responsibility, it will quickly become no one’s.

This connects to a broader readiness challenge around ownership in AI programs. The same dynamic that creates problems when no one owns AI outcomes at the strategic level plays out identically at the metrics level. Accountability has to be assigned, not assumed.

How to Build a Practical AI Performance Measurement Framework in Four Steps

You do not need a six-month consulting engagement to get started. Here is a practical sequence that works.

Step one: Define success before deployment. For each AI agent or workflow, write one to three specific statements that describe what success looks like. Keep them concrete. For instance, “The AI will resolve 65% of Tier 1 support queries without human escalation” is a success statement. “The AI will help improve customer service” is not.

Step two: Establish your baseline. Pull the current performance data for the process your AI is replacing or augmenting. How long does it take? How accurate is it? What does it cost? How satisfied are customers with the outcome? That data is your starting point for every future comparison.

Step three: Build measurement into the rollout schedule. Do not treat monitoring as an afterthought. Instead, schedule weekly check-ins in the first month, moving to monthly reviews as performance stabilizes. Make AI performance a standing agenda item in your technology and operations reviews.

Step four: Assign ownership and act on the data. Every metric needs a named owner. Every review needs to end with a decision, whether to stay the course, adjust the AI’s configuration, escalate a data quality issue, or retrain on new inputs. Measurement only creates value when it drives action.
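The four steps above can be sketched as a small data structure: a success criterion with a target, a baseline, and a named owner, plus a review function that always ends in a decision. The class, the field names, the owner title, and the mapping from results to decisions are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class SuccessCriterion:
    """Hypothetical record for one success statement (steps one, two, and four)."""
    name: str
    target: float    # step one: the concrete success statement
    baseline: float  # step two: pre-AI performance on the same process
    owner: str       # step four: the named owner of this metric
    higher_is_better: bool = True


def review(criterion, observed):
    """Step three and four: compare an observed value and return a decision."""
    if not criterion.owner.strip():
        raise ValueError("every metric needs a named owner")
    sign = 1 if criterion.higher_is_better else -1
    meets_target = sign * (observed - criterion.target) >= 0
    beats_baseline = sign * (observed - criterion.baseline) > 0
    if meets_target:
        decision = "stay the course"
    elif beats_baseline:
        decision = "adjust configuration"  # better than before, short of target
    else:
        decision = "escalate: check data quality or retrain"
    return {"metric": criterion.name, "owner": criterion.owner, "decision": decision}


# The 65% target comes from the example success statement; the rest is made up.
tier1 = SuccessCriterion(
    name="Tier 1 queries resolved without escalation",
    target=0.65,
    baseline=0.40,
    owner="Support Operations Lead",
)
outcome = review(tier1, observed=0.55)
```

Refusing to review a metric with no owner is a deliberate design choice here: it turns the ownership rule from a guideline into something the process enforces.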

If you are finding that your AI agents struggle because of data fragmented across systems, the underlying problem of scattered knowledge silently sabotaging your AI is worth addressing alongside your measurement buildout. Metrics built on fragmented data will give you fragmented insights.

The Leadership Reality Check

Let us be honest about something. Metrics programs do not fail because the metrics are wrong. They fail because leadership does not review them consistently enough to create accountability.

Gartner’s research found that only 27% of executives have a comprehensive AI strategy, and just 20% believe their workforce is actually ready for AI at scale. That gap in strategic preparedness shows up most visibly in measurement. When leadership is not looking at AI performance data, no one below them will treat it as a priority either.

If you are a CTO or CIO reading this, the most direct thing you can do to accelerate your AI measurement maturity is put AI performance metrics in your regular business reviews. Not as a technology report. As a business report. Accuracy rates, cost per task, escalation volumes, and business outcome trends sitting in the same review as revenue and customer satisfaction. That framing changes how every team in the building thinks about AI accountability.

In addition, if your AI agents operate without real-time data, the measurement challenge becomes even harder, because the AI’s output is already out of date before it reaches a decision-maker. The full picture of why AI agents fail without real-time data access is a related read that fills in this gap.

From Measurement to Continuous Improvement

The point of tracking AI performance metrics is not to generate reports. It is to create a closed loop where your AI system gets progressively better over time.

High-maturity AI organizations understand this well. Gartner’s research found that 45% of organizations with strong AI maturity keep their AI initiatives in production for three or more years, against just 20% of low-maturity organizations. The difference is almost never the sophistication of the initial model. Instead, it is whether the organization has the measurement and iteration infrastructure to keep improving after launch.

The loop looks like this: deploy with defined success criteria, measure against them, identify the gap between actual and target performance, adjust, and measure again. That cycle, repeated consistently, is what separates AI programs that deliver compounding value from those stuck permanently in pilot phase.
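To make the loop concrete, the sketch below tracks the shortfall against a target across successive review cycles and checks whether it is shrinking. The function names and the sample measurements are hypothetical, and the sketch assumes a metric where higher is better.

```python
def shortfalls(target, measurements):
    """Gap between target and each measurement; zero once the target is met."""
    return [round(max(target - m, 0.0), 4) for m in measurements]


def loop_is_closing(target, measurements):
    """True if the shortfall never grows from one review cycle to the next."""
    gaps = shortfalls(target, measurements)
    return all(later <= earlier for earlier, later in zip(gaps, gaps[1:]))


# Made-up resolution rates from four consecutive review cycles.
cycles = [0.50, 0.56, 0.61, 0.66]
gaps = shortfalls(0.65, cycles)
closing = loop_is_closing(0.65, cycles)
```

If `loop_is_closing` returns False for several cycles in a row, that is a signal the adjustments being made are not the ones the agent needs.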

Without performance data, however, this loop cannot close. You cannot adjust what you cannot see. And if your documentation of how those workflows are supposed to run does not match how they actually run, your measurement baseline rests on false assumptions. The full picture of what happens when your documentation lies about how work actually gets done explains why this matters before you build any measurement framework.

The Connection Between Measurement and Every Other AI Readiness Challenge

Here is what most people miss when they think about AI performance metrics as a standalone issue. Measurement does not fix your AI readiness gaps in isolation. Rather, it makes every other gap visible.

Poor data quality shows up immediately in your accuracy metrics. They will start reflecting noise before you even realize the source of the problem. Beyond accuracy, if your AI agents are relying on conflicting data across multiple systems, inconsistent outputs will show up in your error rates as well. Processes buried in people’s heads rather than documented anywhere cause your AI’s task completion rate to plateau at a frustratingly low ceiling. Similarly, a security model built only for human users and not for autonomous agents will cause your risk metrics to flash warnings before your security team even identifies the source.

This is why measurement is the pivot point in the AI readiness journey. Not because it solves everything, but because it makes everything else solvable. You cannot fix what you cannot see. And right now, most organizations cannot see nearly enough.

The connection between real-time data access and measurement accuracy is also worth calling out explicitly. If your AI agents are acting on data that is hours or days out of date, the actions they take will look correct in the moment and incorrect in the outcome. Understanding why real-time data access is the hidden reason AI agents struggle will save you from building measurement frameworks on top of a stale data problem.

And if your workflows are undocumented and live only in individual employees’ heads, your AI agent will hit invisible walls that your metrics will expose but that your team will struggle to diagnose without better process documentation.

Conclusion: The AI You Cannot Measure Is the AI You Cannot Trust

Here is the real shift in thinking we want to leave you with. Measurement is not a reporting function. It is a trust function.

You cannot trust an AI system you cannot measure. You cannot justify continued investment in something you cannot prove is working. And you cannot build organizational confidence in AI adoption when the people closest to the work have no visibility into whether the AI is helping or hurting.

The good news is that this is one of the most actionable AI readiness gaps on the list. You do not need a perfect framework on day one. You need clear success criteria, an honest baseline, a consistent review cadence, and named owners for each metric. Start there, and build from it.

At Ysquare Technology, we help organizations design and deploy AI agents with the measurement infrastructure built in from the start, not bolted on after the problems show up. If your AI is running without metrics, or your metrics are tracking the wrong things, we can help you build a framework that connects your AI performance directly to business outcomes.

Connect with us on Ysquare Technology’s LinkedIn page or visit ysquaretechnology.com to start the conversation. Your AI is either getting better every week or quietly drifting. Measurement is how you make sure you know which one is happening.

Ysquare Technology

25/05/2026